Background of research at Covariant

Covariant was founded on research that fundamentally changed the world of AI. Today we continue to perform active research on new and novel approaches that we apply to solve the challenges that our robotic systems see in warehouses and fulfillment centers around the world. What normally can take 5-8 years to make it from the lab to the real world, we do in a matter of weeks and months.

While most of our R&D efforts are kept private, giving us a leading edge in providing autonomous capabilities, we have decided to also start sharing a small fraction of our advances for public consumption. In this post, we focus on a core advancement with great potential for wider impact and applicability well beyond warehouse pick-and-place applications.

This post will cover the core ideas behind our paper that was recently published and presented at the European Conference on Computer Vision (ECCV) in October 2022 in Tel Aviv. It was authored by myself (Andrew Liu), Nikhil Mishra, Maximilian Sieb, Yide (Fred) Shentu, Pieter Abbeel, and Peter Chen. It highlights work that has been essential in the development of our approach as the ability to deal with uncertainty is key to robust operations.

Bounding boxes abound

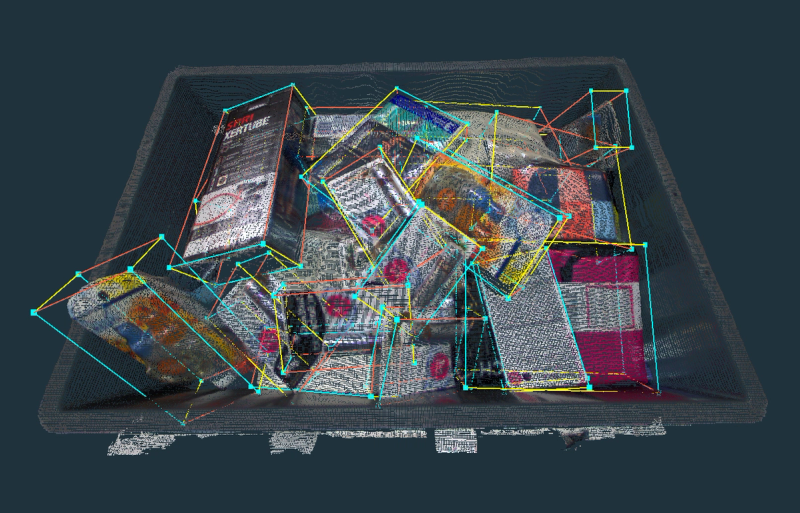

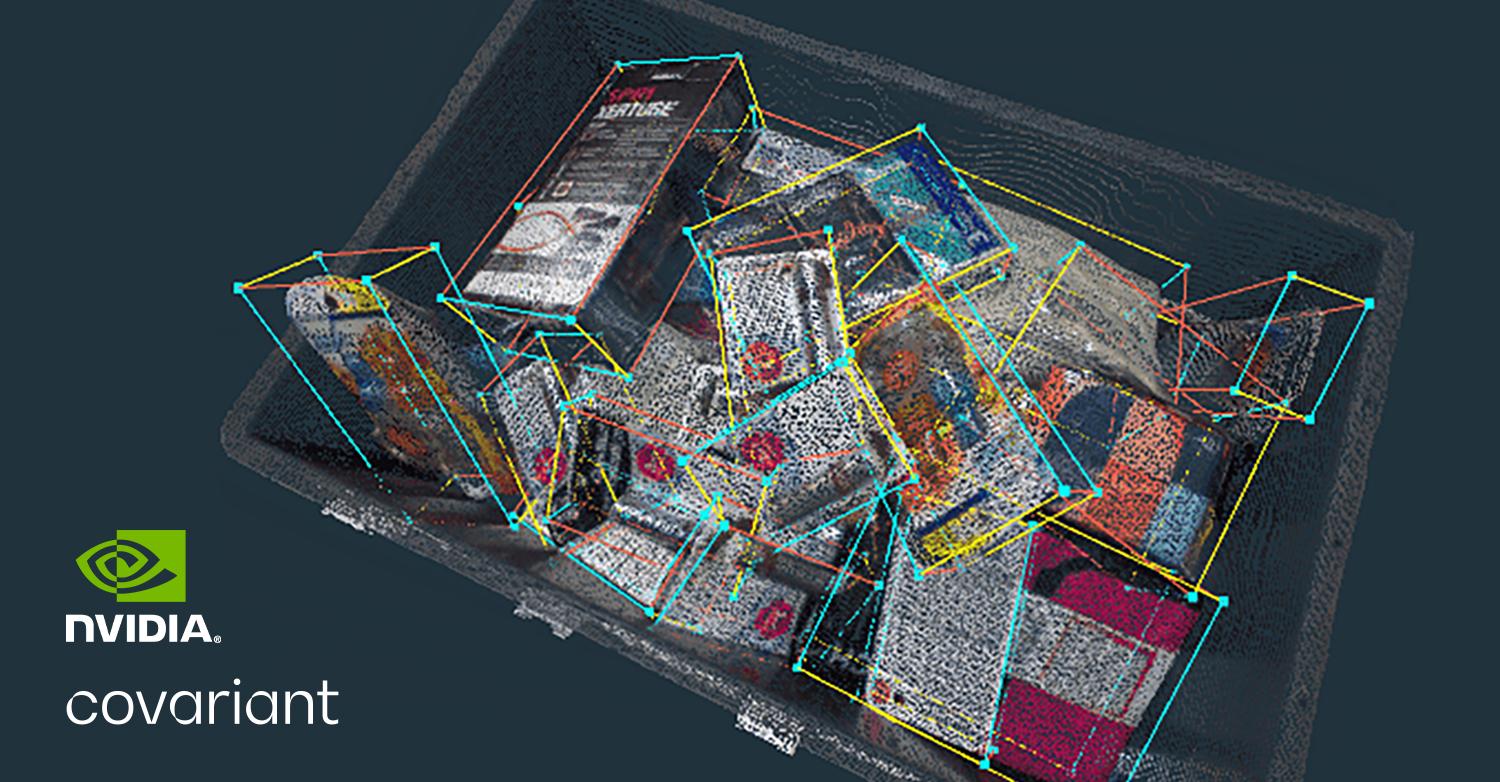

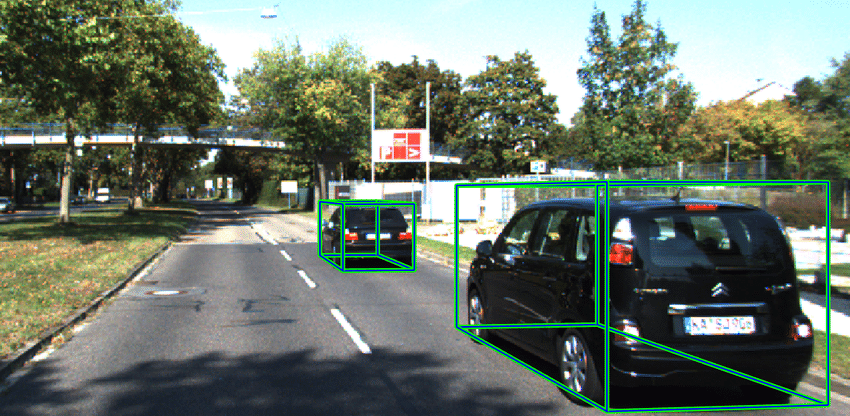

When it comes to dimensioning or trying to understand the shape or boundaries of an object based on images, generating 3D bounding boxes is a common approach. A simple example of this would be to imagine a box around an object, such as a bottle or cup, where the object entirely fits into the box with no part of it protruding. The reason for using bounding boxes is that they provide a reasonable idea of the physical limits of an object. You might use this, for example, in robotic piece-picking, to ensure the robot arm accounts for the physical clearance needed to pick, move, and place objects.

Classical AI prediction without confidence is not enough

To generate 3D bounding boxes based on color point clouds, AI (specifically, neural networks) has often been used, especially in academic research. One example of this is having a neural network provide a prediction of the size (length, width, and height) of a bounding box. However, in many real-world scenarios, objects may be occluded or tightly packed and not fully visible, making size prediction challenging.

Traditional neural networks (NN) generate a single answer or prediction — but the NN does not know the confidence of its bounding box prediction. The NN can be confident that the object exists but make a completely wrong estimation of the dimensions of the bounding box.

In the world of piece-picking robotic automation in warehouses, such a single prediction of unknown confidence level is not sufficient — and in fact unacceptable. This is because if a robot were to act based on a low-confidence prediction without being aware of the higher possibility of error, it could result in collisions that might cause irreparable damage.

A new method: Knowing the unknowns

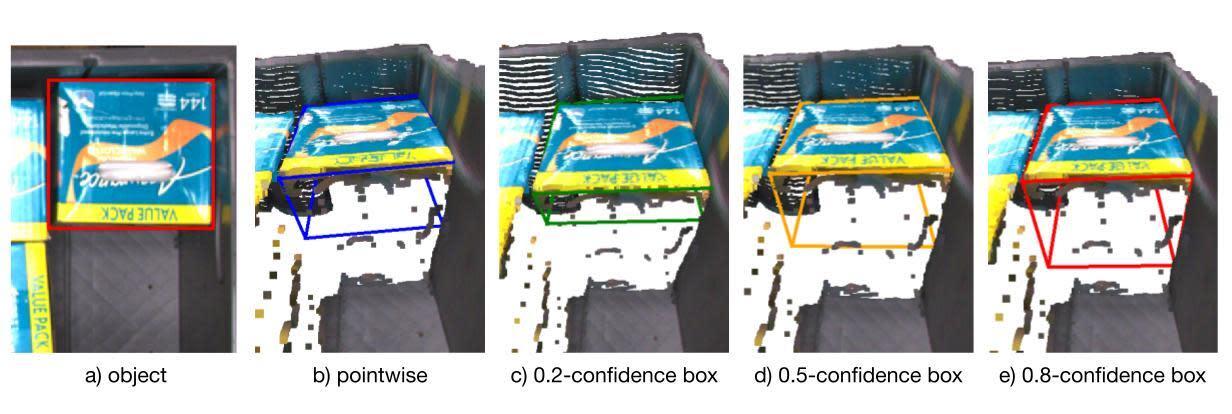

To address this problem, Covariant’s AI research team developed a better approach to AI-generated 3D bounding boxes. We applied a technique that is common elsewhere but had not been used for generating bounding boxes: autoregressive probabilistic modeling.

The essence of what an autoregressive model does is provide multiple predictions that capture the whole range of possibilities, which we can then interpret with a level of confidence. It does this not for just one dimension (e.g. height), but rather for all the dimensions (e.g. height, length, width).

So now when we generate a bounding box for an object, we also know how certain or uncertain we are about the dimensions of that box. By expressing this uncertainty, we give our robots the ability to reason with it in the real world — enabled by AI that knows what it doesn’t know.

Driving better robot performance in the real world

Covariant robots operate in warehouses and fulfillment centers where they pick items from chaotic and unstructured scenarios often. For example, a tote or bin might be filled with a variety of SKUs and items, all overlapping each other. Then the robots place these items onto various types of destinations, from small cubbies to stacked pallets. In each of these cases, it is imperative that the robots understand the size and shape of the objects it is handling to avoid collisions and place them with precision.

The traditional method for generating bounding boxes for these objects is not acceptable — and dangerous — because we don’t want robots to act based on uncertain dimension data. The new method our AI researchers developed gives robots the ability to adapt their movements by taking into account the level of confidence they have about the dimensions of objects they are handling. So if the AI model generates a bounding box with low confidence, the robots know to provide extra clearance to avoid collisions. This results in our robots performing with higher accuracy in the real world.

Continuous improvement driven by cutting-edge research

The AI research team at Covariant continues to push boundaries and invent new approaches to better solve challenges we see in the real-world application of piece-picking robotic automation in warehouses. Driven by the desire to make a real impact in the world, we continually evolve our methodologies.